Optum AI

Overview

At Optum AI, I work on healthcare machine learning systems that bridge research and production. My role spans model development, evaluation design, stakeholder alignment, and translation of AI capabilities into tools that support healthcare delivery. Much of my recent work has centered on longitudinal healthcare modeling, clinician-facing generative AI, and the evaluation frameworks needed to assess these systems responsibly.

I partner closely with colleagues across research, engineering, product, and clinical teams, and that collaboration is one of the best parts of the work.

Healthcare Foundation Model Work

One of the most meaningful projects I have worked on at Optum AI is a foundation model for longitudinal care prediction. The core idea is to treat a member’s care history as a sequence modeling problem: instead of words in a sentence, the sequence is made up of healthcare service codes. By training an autoregressive Transformer on a custom healthcare vocabulary, we built a model that learns how care tends to unfold over time and uses those patterns to predict which services may be relevant next.
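A minimal sketch of the data side of this idea follows. It assumes each member history is a date-ordered list of service-code strings; the code names, special tokens, and helpers (`build_vocab`, `encode`, `next_token_pairs`) are hypothetical, and the Transformer itself is left out. The point is only how a care history becomes a token sequence with next-token targets, the same framing used for language models.

```python
from collections import Counter

# Hypothetical special tokens; the real vocabulary design is not described here.
SPECIAL_TOKENS = ["<pad>", "<bos>", "<eos>", "<unk>"]


def build_vocab(histories, min_count=1):
    """Map each service code seen at least `min_count` times to an integer ID."""
    counts = Counter(code for history in histories for code in history)
    vocab = {tok: i for i, tok in enumerate(SPECIAL_TOKENS)}
    for code, n in sorted(counts.items()):
        if n >= min_count:
            vocab[code] = len(vocab)
    return vocab


def encode(history, vocab):
    """Turn a time-ordered list of codes into token IDs framed by <bos>/<eos>."""
    unk = vocab["<unk>"]
    return [vocab["<bos>"]] + [vocab.get(code, unk) for code in history] + [vocab["<eos>"]]


def next_token_pairs(token_ids):
    """Shift by one position: the model sees ids[:-1] and learns to predict ids[1:]."""
    return token_ids[:-1], token_ids[1:]


# Toy, made-up histories (not real member data), each sorted by service date.
histories = [
    ["SLEEP_STUDY", "SLEEP_APNEA_VISIT", "CPAP_SUPPLIES"],
    ["PRIMARY_CARE", "SLEEP_STUDY", "CPAP_SUPPLIES"],
]
vocab = build_vocab(histories)
inputs, targets = next_token_pairs(encode(histories[0], vocab))
print(inputs, targets)  # [1, 7, 6, 4] [7, 6, 4, 2]
```

During training, `inputs` would be fed to the autoregressive model and the loss computed against `targets` at every position, so each code is predicted from the care that came before it.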

This work became the core engine behind a production recommendation experience. In practice, the model helps surface care-path suggestions that are contextually related to what a member is currently exploring and what similar members have historically used in their plan journey.

The public Optum / UnitedHealthcare press coverage for this product experience can be found here:

How It Works in Product

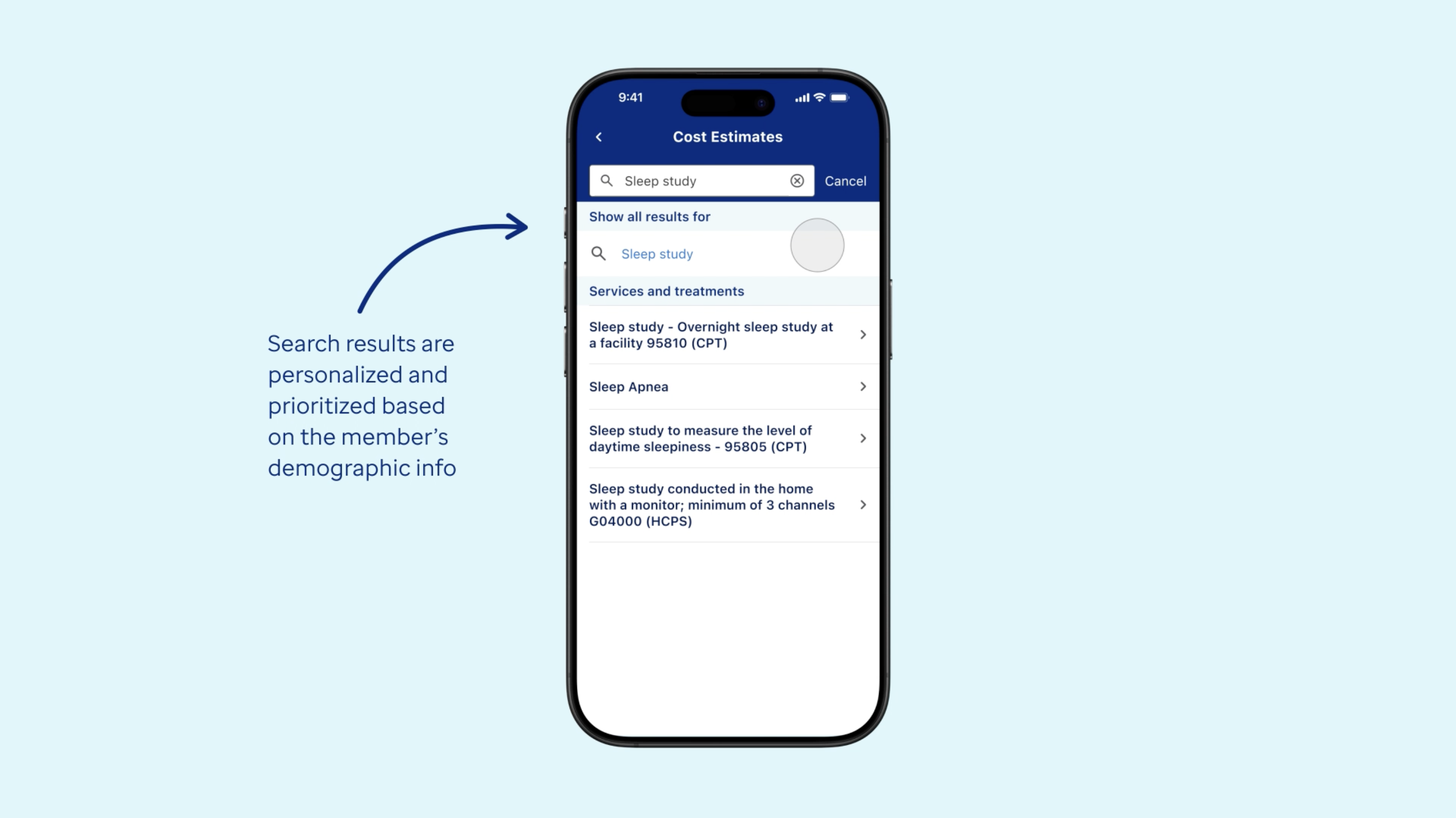

The screenshots below show one example of how the recommendation flow appears in the deployed mobile experience.

A member begins by searching for a service. In this example, the search is for a sleep study, and the ranked results include options related to sleep apnea and overnight sleep studies.

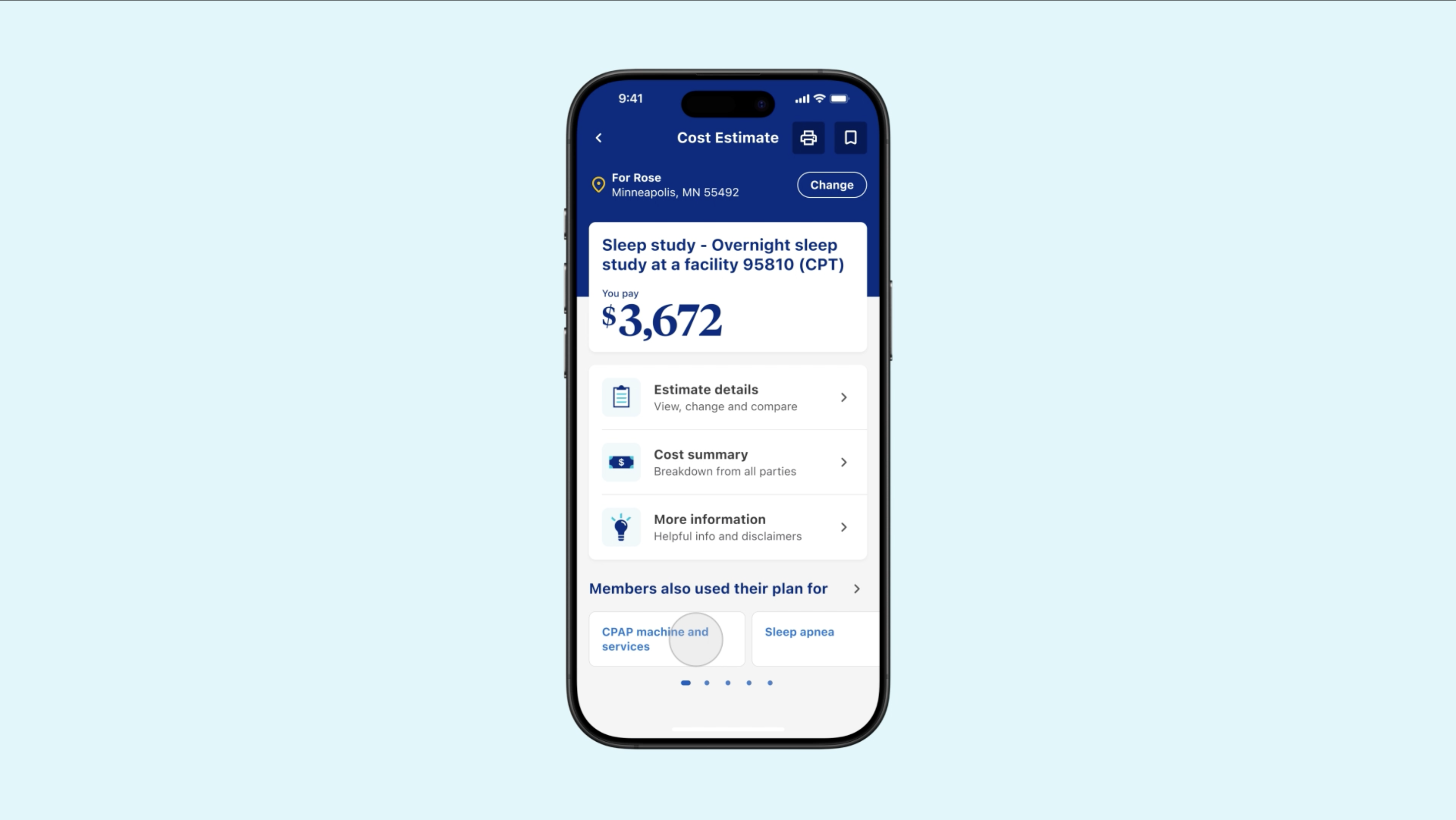

Once the member selects a relevant result, such as "Sleep apnea" or a related sleep study option, they arrive at the cost estimate page for that service. There, the product can surface a recommendation shelf titled "Members also used their plan for." This shelf is where my model serves personalized adjacent suggestions based on patterns it learned from longitudinal care sequences. In this example, it surfaces related care-path options such as "CPAP machine and services" and "Sleep apnea."

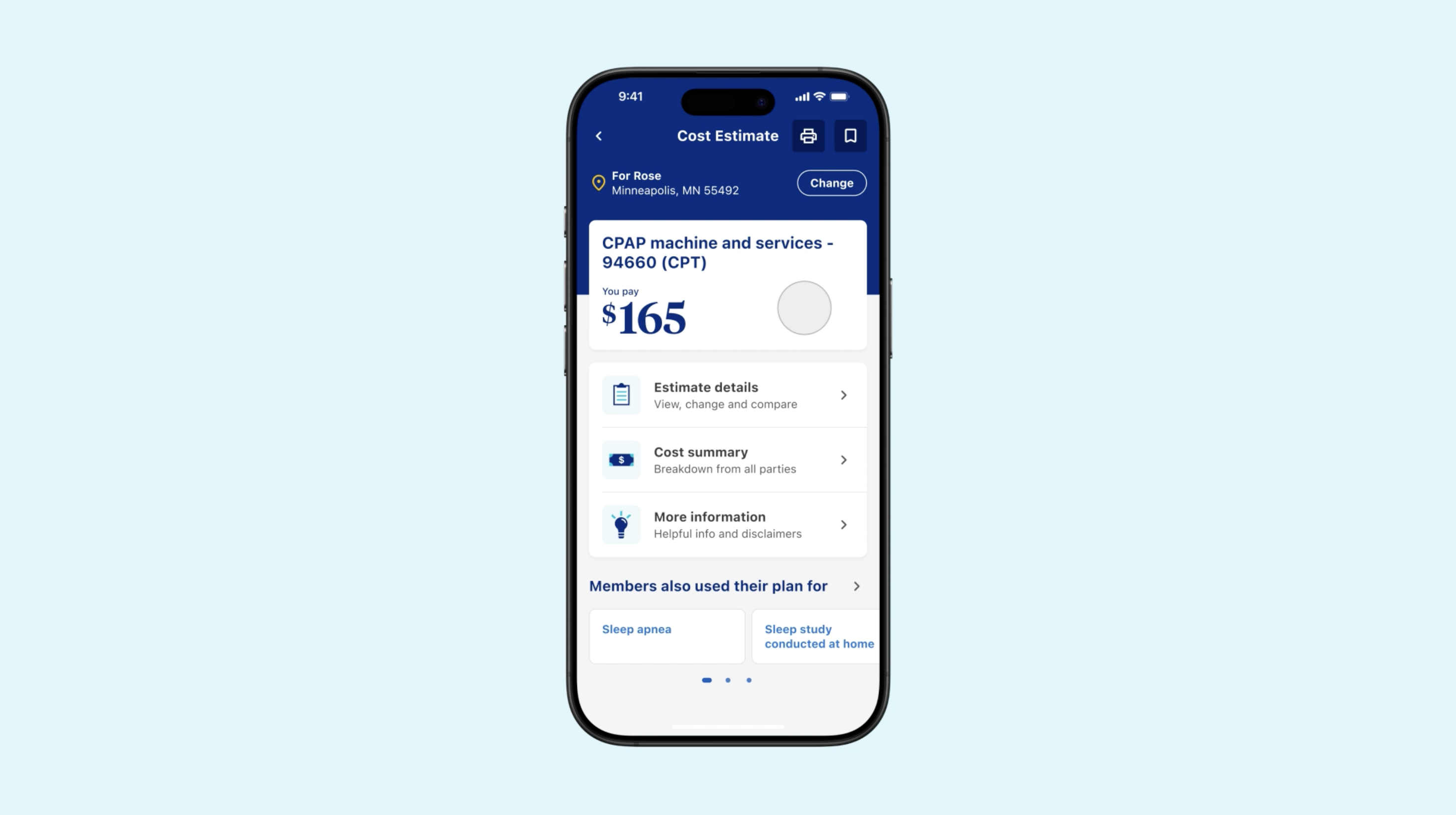

If the member taps one of those suggestions, such as "CPAP machine and services," they go directly to the cost estimate experience for that downstream service. This is the recommendation in action: each selection moves the member into another relevant estimate and planning experience.
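One plausible way a next-code distribution could feed a shelf like "Members also used their plan for" is sketched below. It assumes the model returns one score per vocabulary code for the current context; the code keys, display labels, the `recommend_adjacent` helper, and the `min_prob` cutoff are all hypothetical and are not the production serving logic.

```python
import math


def softmax(logits):
    """Convert raw per-code scores into probabilities (numerically stable)."""
    top = max(logits.values())
    exps = {code: math.exp(score - top) for code, score in logits.items()}
    total = sum(exps.values())
    return {code: e / total for code, e in exps.items()}


def recommend_adjacent(next_code_logits, context_codes, labels, k=2, min_prob=0.0):
    """Return up to k member-facing labels for the most likely next codes.

    Skips codes already in the current context and codes with no display label
    (e.g. special tokens such as "<eos>"), then drops anything below min_prob.
    """
    probs = softmax(next_code_logits)
    candidates = [
        (p, code)
        for code, p in probs.items()
        if code not in context_codes and code in labels and p >= min_prob
    ]
    # Highest probability first; break ties by code so the output is deterministic.
    candidates.sort(key=lambda item: (-item[0], item[1]))
    return [labels[code] for _, code in candidates[:k]]


# Hypothetical model scores for a member viewing a sleep study estimate.
logits = {
    "SLEEP_STUDY": 2.0,
    "CPAP_SUPPLIES": 1.5,
    "SLEEP_APNEA_VISIT": 1.0,
    "<eos>": 0.5,
    "DERMATOLOGY_VISIT": -1.0,
}
labels = {
    "SLEEP_STUDY": "Sleep study",
    "CPAP_SUPPLIES": "CPAP machine and services",
    "SLEEP_APNEA_VISIT": "Sleep apnea",
    "DERMATOLOGY_VISIT": "Dermatology visit",
}
print(recommend_adjacent(logits, {"SLEEP_STUDY"}, labels))
# ['CPAP machine and services', 'Sleep apnea']
```

Converting scores to probabilities is not needed for ranking alone, since softmax preserves order, but it makes a confidence cutoff such as `min_prob` meaningful.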

For me, this project is a strong example of what makes health AI exciting: a relatively abstract machine learning idea can be turned into a concrete member-facing feature that improves navigation, supports decision making, and operates within a real healthcare product.

Other Areas of Focus

- Clinician-facing generative AI: I have also contributed to multiple healthcare AI solutions that generate medical-record-grounded text for clinician-in-the-loop workflows, with a focus on usefulness, quality, and safe human oversight.

- Evaluation and measurement: I built evaluation workflows that combine statistical natural language processing metrics with LLM-based judging strategies to better assess generated outputs in health AI settings.

- Responsible AI review: I developed tooling to support internal audit and review teams in triaging pre-deployment AI systems against policy, best practices, and historical review patterns.
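A minimal sketch of blending a statistical metric with an LLM judge, assuming both scores are scaled to [0, 1]. ROUGE-1-style unigram F1 stands in for the statistical NLP metrics, and `stub_judge` is a hypothetical placeholder for a model call; neither is presented as the actual evaluation workflow.

```python
from collections import Counter


def unigram_f1(candidate, reference):
    """ROUGE-1-style F1 on whitespace tokens, with overlap counts clipped per token."""
    cand = candidate.lower().split()
    ref = reference.lower().split()
    if not cand or not ref:
        return 0.0
    ref_counts = Counter(ref)
    overlap = sum(min(n, ref_counts[tok]) for tok, n in Counter(cand).items())
    if overlap == 0:
        return 0.0
    precision = overlap / len(cand)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)


def stub_judge(candidate, reference):
    """Hypothetical stand-in for an LLM-as-judge call returning a score in [0, 1]."""
    return 0.9


def blended_score(candidate, reference, judge, weight=0.5):
    """Report the lexical and judge scores plus a weighted blend of the two."""
    lexical = unigram_f1(candidate, reference)
    judged = judge(candidate, reference)
    if not 0.0 <= judged <= 1.0:
        raise ValueError("judge score must be in [0, 1]")
    return {
        "lexical": lexical,
        "judge": judged,
        "blended": weight * lexical + (1 - weight) * judged,
    }


reference = "patient reports improved sleep with nightly cpap use"
candidate = "patient reports better sleep using cpap nightly"
scores = blended_score(candidate, reference, stub_judge)
print(scores)
```

Here 5 of the 7 candidate tokens and 5 of the 8 reference tokens overlap, so the lexical F1 is 2/3 even though the paraphrase is faithful; keeping both component scores next to the blend makes that kind of disagreement between the two signals visible.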

What I Enjoy Most

One of the most rewarding parts of the work is operating at the boundary between technical depth and real-world constraints. Healthcare AI is not just about building a strong model. It also requires clear communication, careful validation, and close partnership with clinicians, product leaders, and business stakeholders. I enjoy helping experts from different domains align on what a system does, how it should be evaluated, and what responsible deployment looks like.